The landscape of e-commerce navigation has shifted from rigid keyword matching to fluid, intent-based discovery. By 2026, AI product search in shopping apps is no longer a luxury feature; it is the baseline for user retention. Modern shoppers expect apps to understand "a dress for a summer wedding in Tuscany" just as easily as "blue maxi dress."

This guide is designed for developers and product architects tasked with building or upgrading search systems to meet 2026 standards. We will move beyond basic LLM wrappers to explore the integration of vector embeddings, real-time personalization, and the infrastructure required to support millions of concurrent queries.

The 2026 State of Search: From Keywords to Context

Traditional lexical search, relying on Exact Match or BM25 algorithms, is hitting its ceiling. In 2026, the industry has pivoted toward Semantic Search and Multimodal Input. This shift addresses the "null results" problem that plagued mobile commerce for a decade.

Retail technology audits from 2025 suggest that users are about three times more likely to convert when they can search using natural language or images. The "Search Bar" is evolving into a "Discovery Hub" where the AI interprets three kinds of intent:

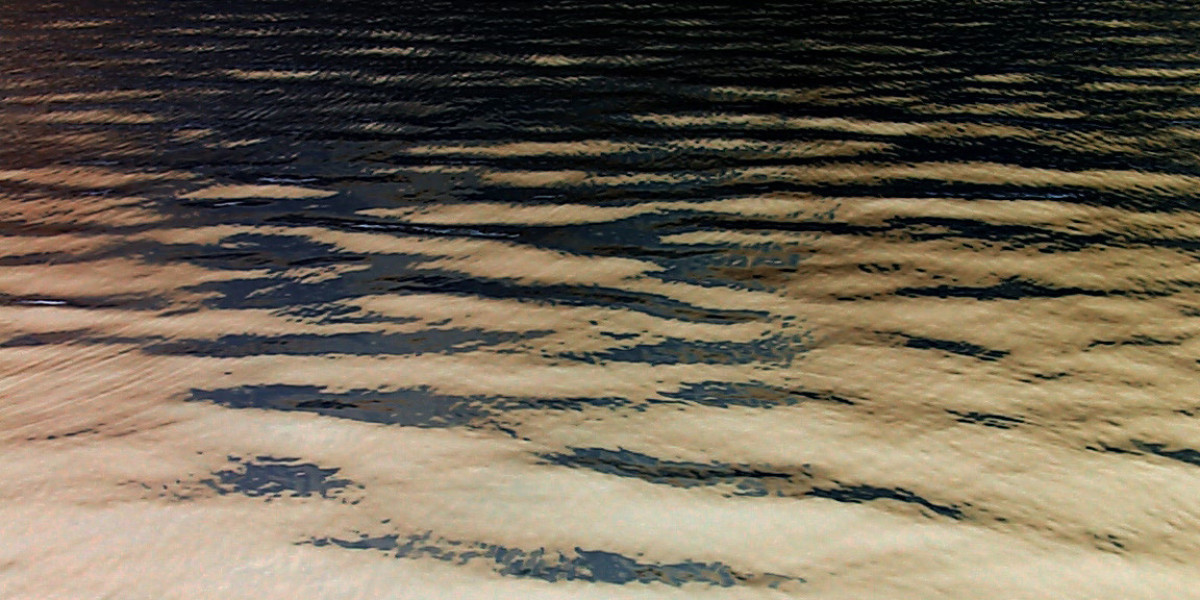

Visual Intent: Analyzing a screenshot to find similar textures and patterns.

Contextual Intent: Factoring in the user’s location, past purchases, and current season.

Vague Intent: Handling queries like "something to fix a leaky faucet" by mapping to specific product categories and tutorials.

For teams specializing in mobile app development in Georgia, the challenge lies in balancing these heavy computational requirements against the low-latency expectations of mobile users.

Core Architecture: The Multimodal Vector Pipeline

To implement a 2026-ready search, you must move away from flat database queries toward a pipeline that handles high-dimensional data.

1. The Embedding Layer

Every product in your catalog, including its title, description, reviews, and images, must be converted into a numerical representation called a vector embedding. Models such as CLIP (Contrastive Language-Image Pre-training) or proprietary retail-tuned transformers map text and images into a shared embedding space, so that a photo of a shoe and the text "breathable running footwear" land close to each other rather than in separate indexes.
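As a minimal sketch of how one product vector might be built, the snippet below fuses a text embedding and an image embedding by weighted average. The function names, the `text_weight` parameter, and the three-dimensional vectors are all hypothetical; a real embedding model would supply vectors with hundreds of dimensions, and weighted averaging is only one of several possible fusion strategies.

```python
import math

def l2_normalize(vec):
    """Scale a vector to unit length so cosine similarity becomes a dot product."""
    norm = math.sqrt(sum(x * x for x in vec))
    if norm == 0.0:
        raise ValueError("cannot normalize a zero vector")
    return [x / norm for x in vec]

def fuse_embeddings(text_vec, image_vec, text_weight=0.5):
    """Weighted average of unit-length text and image vectors, re-normalized."""
    if len(text_vec) != len(image_vec):
        raise ValueError("text and image vectors must share one dimensionality")
    t = l2_normalize(text_vec)
    i = l2_normalize(image_vec)
    mixed = [text_weight * a + (1.0 - text_weight) * b for a, b in zip(t, i)]
    return l2_normalize(mixed)

def cosine(a, b):
    """Cosine similarity between two vectors of equal length."""
    return sum(x * y for x, y in zip(l2_normalize(a), l2_normalize(b)))

# Hypothetical toy vectors standing in for model output.
shoe_text = [0.9, 0.1, 0.0]
shoe_image = [0.8, 0.2, 0.1]
product_vec = fuse_embeddings(shoe_text, shoe_image)

query_vec = [1.0, 0.0, 0.0]      # stands in for an embedded running-shoe query
unrelated_vec = [0.0, 0.0, 1.0]  # stands in for an unrelated product

print(cosine(product_vec, query_vec) > cosine(product_vec, unrelated_vec))
```

Normalizing before and after the average keeps either modality from dominating simply because its raw vectors are longer.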

2. The Vector Database

Standard SQL databases without a vector index are inefficient for calculating the "distance" between millions of vectors. Success in 2026 requires a dedicated vector database (like Pinecone, Milvus, or Weaviate) or a high-performance vector extension for PostgreSQL (pgvector). Either option supports Approximate Nearest Neighbor (ANN) search, which trades a small amount of accuracy for results that typically return in milliseconds.
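To make the "distance" idea concrete, here is a small exact nearest-neighbor scan. It is the brute-force baseline that an ANN index approximates, not how a vector database works internally. The catalog contents and the `top_k` helper are hypothetical, and the vectors are assumed to be unit-length already.

```python
import heapq

def dot(a, b):
    """Dot product; equals cosine similarity when both vectors are unit-length."""
    return sum(x * y for x, y in zip(a, b))

def top_k(query, catalog, k=2):
    """Exact nearest neighbors by dot product over every catalog vector."""
    scored = ((dot(query, vec), product_id) for product_id, vec in catalog.items())
    return [(pid, score) for score, pid in heapq.nlargest(k, scored)]

# Hypothetical two-dimensional, unit-length product vectors.
catalog = {
    "running-shoe": [1.0, 0.0],
    "hiking-boot": [0.8, 0.6],
    "maxi-dress": [0.0, 1.0],
}

results = top_k([1.0, 0.0], catalog, k=2)
print(results)
```

A scan like this touches every vector, so its cost grows linearly with the catalog. It remains useful as ground truth when measuring how much recall an ANN index gives up.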

3. Real-Time Reranking

Initial vector search provides relevance, but it doesn't provide "business logic." A secondary reranking layer is necessary to prioritize:

Inventory levels (don't show out-of-stock items first).

Profit margins.

User-specific preferences (favorite colors or brands).
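The reranking priorities above could be sketched as a blended score. Everything here is an assumption for illustration: the `Candidate` fields, the weights, and the choice to push out-of-stock items below all in-stock ones rather than removing them.

```python
from dataclasses import dataclass

@dataclass
class Candidate:
    product_id: str
    similarity: float  # score from the vector search, assumed to be in [0, 1]
    in_stock: bool
    margin: float      # profit margin as a fraction, e.g. 0.30
    brand: str

def rerank(candidates, preferred_brands, margin_weight=0.2, brand_boost=0.1):
    """Order candidates by stock status first, then by a blended business score."""
    def score(c):
        s = c.similarity + margin_weight * c.margin
        if c.brand in preferred_brands:
            s += brand_boost
        return s
    # In-stock items sort ahead of out-of-stock ones, then by descending score.
    return sorted(candidates, key=lambda c: (c.in_stock, score(c)), reverse=True)

candidates = [
    Candidate("a", 0.92, False, 0.40, "Acme"),
    Candidate("b", 0.88, True, 0.10, "Brio"),
    Candidate("c", 0.85, True, 0.35, "Acme"),
]

ranked = rerank(candidates, preferred_brands={"Acme"})
print([c.product_id for c in ranked])
```

In this toy data the most similar item, "a", falls to the bottom because it is out of stock, while "c" overtakes "b" on margin and brand preference. The weights would need tuning against real conversion data.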

Implementation Strategy: Step-by-Step

Phase 1: Data Enrichment

AI is only as good as the data it consumes. You must automate the generation of "alt-text" and technical attributes for your catalog. Use a vision-language model to scan product images and generate tags that the manufacturer might have missed, such as "bohemian style" or "minimalist aesthetic."

Phase 2: Integrating Multimodal Input

Allow users to upload photos or use their camera directly within the search bar. This requires a mobile-side optimization strategy to compress images before they hit your API, ensuring the search feels instantaneous even on 4G networks.

Phase 3: Conversational Refinement

If a search is too broad, the AI should ask clarifying questions.

User: "I need a new laptop."

AI: "Are you looking for something for professional video editing, or a lightweight model for travel?"

This mirrors the "Guided Selling" approach that has become a staple of BOFU (Bottom of Funnel) conversion strategies in 2026. For developers who also work on the financial backend of these apps, understanding the security side of crypto wallet app development is essential for integrating modern payment search filters, such as "items purchasable with ETH."
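One way to decide when a Phase 3 clarifying question is worth asking is to check whether any single category dominates the top results. The sketch below assumes each result carries a category label; the function names and the `dominance_threshold` value are hypothetical, and generating the question text itself is left to the conversational layer.

```python
from collections import Counter

def needs_clarification(result_categories, dominance_threshold=0.6):
    """Return True when no single category dominates the top results."""
    if not result_categories:
        return False  # an empty result set is a different problem
    counts = Counter(result_categories)
    top_share = counts.most_common(1)[0][1] / len(result_categories)
    return top_share < dominance_threshold

def clarifying_options(result_categories, max_options=2):
    """Offer the most common categories as choices for a follow-up question."""
    return [cat for cat, _ in Counter(result_categories).most_common(max_options)]

# Hypothetical category labels for the top five results of two queries.
broad = [
    "video-editing laptop", "travel laptop", "video-editing laptop",
    "travel laptop", "gaming laptop",
]
narrow = ["travel laptop"] * 4 + ["gaming laptop"]

print(needs_clarification(broad), clarifying_options(broad))
print(needs_clarification(narrow))
```

For the broad query no category holds 60% of the results, so the app would ask the user to choose between the two leading options. The narrow query skips the question and shows results directly.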

AI Tools and Resources

Pinecone Serverless — A vector database that scales based on query volume.

Best for: Managing high-dimensional product embeddings without managing infrastructure.

Why it matters: It drastically reduces the "cold start" cost for SMBs entering the AI search space.

Who should skip it: Enterprise clients with strict data residency requirements who need on-premise deployments.

2026 status: Widely adopted as the industry standard for retail vector storage.

Google Vertex AI Search for Retail — A managed suite for implementing "Google-quality" search.

Best for: Rapid deployment of semantic search and recommendations.

Why it matters: Includes built-in models specifically trained on retail behavior patterns.

Who should skip it: Developers who require deep customization of the underlying ranking algorithms.

2026 status: Updated with 2026 LLM capabilities for better conversational understanding.

Cohere Rerank — A specialized endpoint for improving search result relevancy.

Best for: Adding a sophisticated "ranking" layer on top of existing keyword or vector search.

Why it matters: Bridges the gap between "mathematically similar" and "actually what the user wants."

Who should skip it: Very small catalogs where simple sorting suffices.

2026 status: Current version supports cross-lingual reranking for global shopping apps.

Risks, Trade-offs, and Limitations

While AI search is transformative, it introduces new failure modes that traditional systems did not face.

When AI Search Fails: The "Semantic Hallucination" Scenario

In this scenario, the vector search finds a "mathematical match" that is contextually absurd. For example, a user searches for "poison green dress," and the AI, focusing on the word "poison," begins suggesting pest control products alongside apparel.

Warning signs:

High "bounce rates" from the search results page.

Users repeatedly hitting "back" and slightly altering the same query.

Zero-click sessions on highly specific long-tail queries.

Why it happens: The model is over-weighting a specific token (the noun "poison") rather than the intent (the color "poison green"). This occurs when the embedding model lacks domain-specific training in fashion or retail terminology.

Alternative approach: Implement a Hybrid Search model. Combine vector search (for meaning) with traditional keyword filtering (for specific attributes like color, brand, or SKU). Use a "threshold gate" where results must pass both a semantic and a lexical check before being displayed to the user.
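A minimal sketch of such a threshold gate follows, assuming each candidate arrives with a semantic score from the vector search. The token-overlap measure, both thresholds, and the sample products are hypothetical. A production system would more likely use BM25 or structured attribute filters for the lexical side.

```python
def lexical_overlap(query, text):
    """Fraction of query tokens that also appear in the product text."""
    q_tokens = set(query.lower().split())
    t_tokens = set(text.lower().split())
    if not q_tokens:
        return 0.0
    return len(q_tokens & t_tokens) / len(q_tokens)

def threshold_gate(query, candidates, min_semantic=0.75, min_lexical=0.5):
    """Keep only candidates that pass both the semantic and the lexical check."""
    kept = []
    for product_text, semantic_score in candidates:
        passes_semantic = semantic_score >= min_semantic
        passes_lexical = lexical_overlap(query, product_text) >= min_lexical
        if passes_semantic and passes_lexical:
            kept.append(product_text)
    return kept

# Hypothetical (product text, semantic score) pairs from a vector search.
candidates = [
    ("poison green satin dress", 0.91),
    ("rat poison bait station", 0.80),
    ("emerald evening dress", 0.78),
]

visible = threshold_gate("poison green dress", candidates)
print(visible)
```

The pest control item is filtered out because it shares only one of three query tokens. So is the emerald dress, which shows the trade-off: a strict lexical gate improves precision at the cost of recall, so the thresholds deserve tuning per category.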

Key Takeaways for 2026

Move Beyond Keyword-Only Search: Adopt vector-based semantic search to capture user intent and reduce "zero-result" screens, while keeping keyword filters for exact attributes such as color, brand, or SKU.

Prioritize Latency: Mobile users in 2026 expect search responses within about 200 ms; use edge computing and optimized embedding models to achieve this.

Hybrid is Safer: Pure AI search can hallucinate; always back your vector results with a layer of hard-coded business logic and keyword constraints.

Personalization is Native: By 2026, search results that don't account for a user's size, style, or past behavior are considered broken.